Connecting to Databricks (Deprecated)

The CloudZero Databricks Adaptor

The CloudZero Databricks adaptor is containerized software that can be run from any container hosting platform. The adaptor pulls your Databricks spend data, converts it to the CloudZero Common Bill Format (CBF), and creates the data drop that the CloudZero platform then ingests and processes.

This method is deprecated: This version of the Databricks Adaptor is no longer supported. If you are looking to bring Databricks spend into CloudZero, please refer to Connection to Databricks (Tier 1).

Architecture Overview

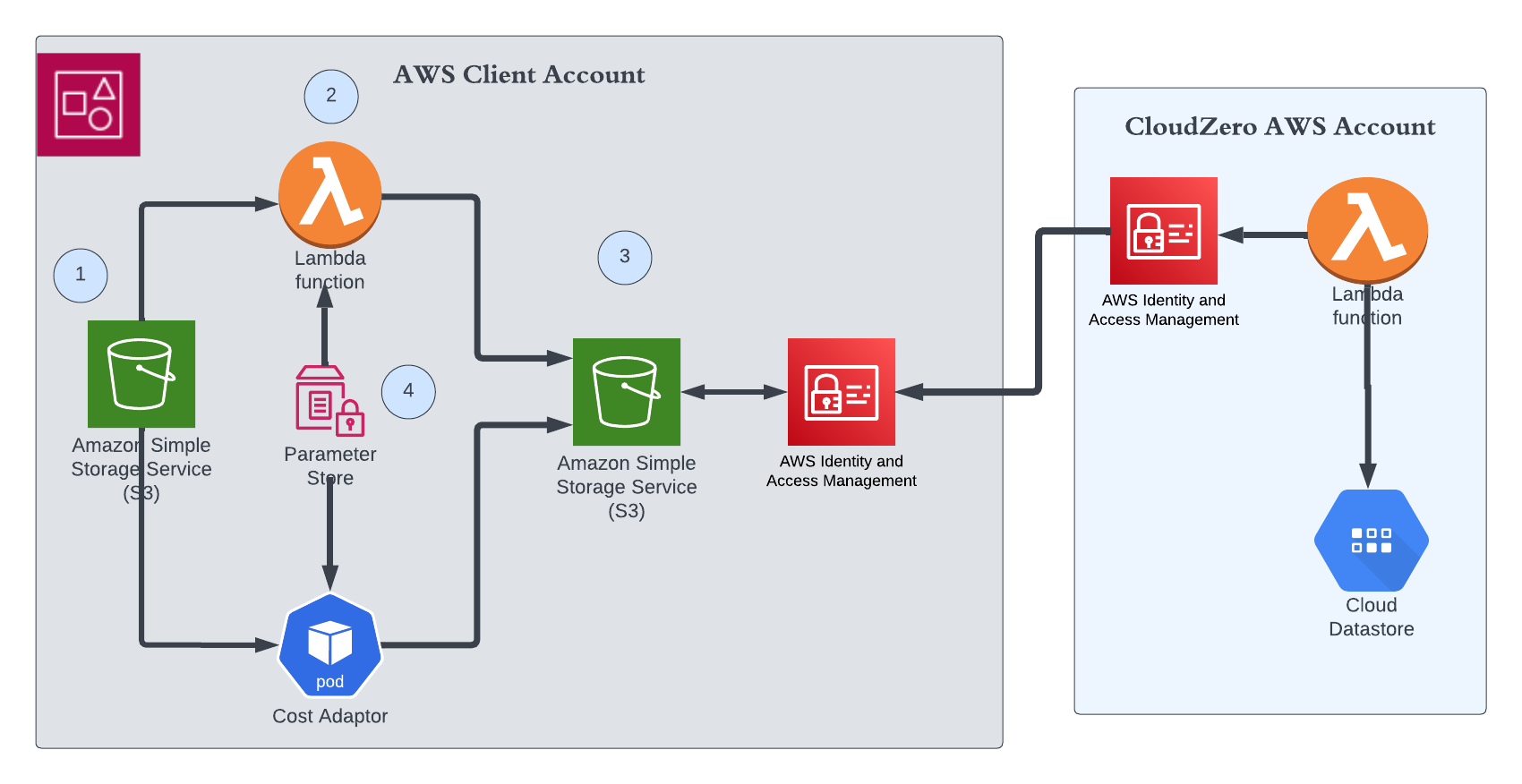

As the diagram above shows, there are four items to set up in your account:

- An S3 bucket where Databricks delivers usage logs.

- An S3 bucket to store the results that CloudZero will read.

- Compute to run the adaptor, which can be one of two types: a container in a Lambda function or a container running in Kubernetes.

- Parameter Store to hold the configuration. The configuration is the same for either compute method.

How to Set Up the Databricks Adaptor

Step 1: Determine the Runtime Environment

The Adaptor can be run as a container inside Lambda or as a container in Kubernetes on EKS or native Kubernetes. CloudZero publishes two containers depending on your choice of runtime environment.

- Lambda - docker pull ghcr.io/cloudzero/cloudzero-cost-adaptor-databricks-lambda:latest

- Kubernetes - docker pull ghcr.io/cloudzero/cloudzero-cost-adaptor-databricks-main:latest
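As a minimal sketch of the choice above, the two published image references can be kept in a small lookup so deployment scripts select the right one. The image names are copied from the list above; the `RUNTIME_IMAGES`, `image_for`, and `pull_command` names are hypothetical and not part of the adaptor.

```python
# Image references copied from the list above. The helper names below are
# illustrative assumptions, not part of the CloudZero adaptor.
RUNTIME_IMAGES = {
    "lambda": "ghcr.io/cloudzero/cloudzero-cost-adaptor-databricks-lambda:latest",
    "kubernetes": "ghcr.io/cloudzero/cloudzero-cost-adaptor-databricks-main:latest",
}


def image_for(runtime: str) -> str:
    """Return the container image reference for the chosen runtime."""
    key = runtime.strip().lower()
    if key not in RUNTIME_IMAGES:
        raise ValueError(
            f"unknown runtime {runtime!r}; expected one of {sorted(RUNTIME_IMAGES)}"
        )
    return RUNTIME_IMAGES[key]


def pull_command(runtime: str) -> list[str]:
    """Build the docker pull command as an argument list (e.g. for subprocess.run)."""
    return ["docker", "pull", image_for(runtime)]
```

Passing the result of `pull_command("lambda")` to `subprocess.run` would run the same `docker pull` shown in the list.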

Step 2: Set up your billable usage logs delivery

In order for the adaptor to be able to access the data it needs from your Databricks instance, you will need to configure your billable usage logs delivery.

Refer to the Databricks billable usage logs delivery documentation to export your logs into the appropriate S3 bucket for your adaptor to consume and convert to the CloudZero format.

Please Note: You need two buckets. The billable usage logs delivery should use a different S3 bucket from the one you are configuring to store your adaptor data exports.
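The two-bucket requirement is easy to check before deploying. The following is only a sketch; the function name and the example bucket names are hypothetical.

```python
def check_buckets(usage_logs_bucket: str, output_bucket: str) -> None:
    """Raise ValueError unless two distinct, non-empty bucket names are given."""
    if not usage_logs_bucket or not output_bucket:
        raise ValueError("both bucket names are required")
    if usage_logs_bucket == output_bucket:
        raise ValueError(
            "the usage logs bucket and the adaptor output bucket must be different"
        )


# Example with hypothetical bucket names; passes silently because they differ.
check_buckets("example-databricks-usage-logs", "example-cloudzero-adaptor-output")
```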

Step 3: Set up your S3 bucket to output spend data

You will need to set up an S3 bucket where your adaptor will drop its files and from which the CloudZero platform will pull them.

Create an AWS S3 bucket where the files will be stored.
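If you create the bucket programmatically, one detail to watch is that the S3 `CreateBucket` call takes a `LocationConstraint` for every region except `us-east-1`, where it must be omitted. The sketch below builds the arguments for boto3's `create_bucket`; the helper name, bucket name, and region are placeholders, not values required by the adaptor.

```python
def create_bucket_kwargs(bucket: str, region: str) -> dict:
    """Build keyword arguments for boto3's S3 create_bucket call."""
    kwargs = {"Bucket": bucket}
    # us-east-1 is the S3 default region and rejects an explicit constraint.
    if region != "us-east-1":
        kwargs["CreateBucketConfiguration"] = {"LocationConstraint": region}
    return kwargs


# Usage, assuming boto3 is installed and AWS credentials are configured:
#   import boto3
#   s3 = boto3.client("s3", region_name="us-west-2")
#   s3.create_bucket(**create_bucket_kwargs("example-cloudzero-adaptor-output", "us-west-2"))
```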

Step 4: Set up the AWS Parameter Store

The adaptor reads its configuration settings from the AWS Systems Manager Parameter Store.

Set up Parameter Store entries for your CloudZero Databricks adaptor configuration values. You will need to add the parameters in the table below. You only need to set the values that pertain to your Databricks account type (i.e., Enterprise versus Standard). You can use the encrypted storage option for any of the values as necessary.

Please Note: When adding these parameters, ensure they are all located on the same path, such as /CloudZero/Databricks_Adaptor/, and make note of that path for use later in the setup process.
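One way to keep every parameter on the same path is to build the names from a single prefix. The sketch below assumes each configuration key is stored as a parameter named `<path>/<KEY>`; that layout, the helper names, and the example override value are assumptions rather than documented adaptor behavior. `put_parameter` is the boto3 Systems Manager call, and `SecureString` corresponds to the encrypted storage option.

```python
def parameter_name(path: str, key: str) -> str:
    """Join the shared path and a configuration key into one parameter name."""
    return "/" + path.strip("/") + "/" + key


def put_overrides(ssm_client, path: str, overrides: dict, secure: bool = False) -> list:
    """Write each override under the shared path and return the names written.

    ssm_client is expected to be a boto3 SSM client, i.e. boto3.client("ssm").
    """
    names = []
    for key, value in overrides.items():
        name = parameter_name(path, key)
        ssm_client.put_parameter(
            Name=name,
            Value=str(value),
            Type="SecureString" if secure else "String",
            Overwrite=True,  # replace an existing value instead of failing
        )
        names.append(name)
    return names


# Usage, assuming boto3 is installed and AWS credentials are configured:
#   import boto3
#   put_overrides(boto3.client("ssm"), "/CloudZero/Databricks_Adaptor/",
#                 {"ENTERPRISE_JOBS_COMPUTE": "0.18"})  # hypothetical override value
```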

| Configuration | Description |
|---|---|
| ENTERPRISE_ALL_PURPOSE_COMPUTE | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| ENTERPRISE_ALL_PURPOSE_COMPUTE.DLT | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| ENTERPRISE_ALL_PURPOSE_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| ENTERPRISE_DLT_ADVANCED_COMPUTE | This should match your Databricks pricing model. Defaults to 0.36. Override by setting your value in Parameter Store. |
| ENTERPRISE_DLT_ADVANCED_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.36. Override by setting your value in Parameter Store. |
| ENTERPRISE_DLT_CORE_COMPUTE | This should match your Databricks pricing model. Defaults to 0.20. Override by setting your value in Parameter Store. |
| ENTERPRISE_DLT_CORE_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.20. Override by setting your value in Parameter Store. |
| ENTERPRISE_DLT_PRO_COMPUTE | This should match your Databricks pricing model. Defaults to 0.25. Override by setting your value in Parameter Store. |
| ENTERPRISE_DLT_PRO_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.25. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_COMPUTE | This should match your Databricks pricing model. Defaults to 0.20. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.20. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_LIGHT_COMPUTE | This should match your Databricks pricing model. Defaults to 0.13. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.47. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_AP_SEOUL | This should match your Databricks pricing model. Defaults to 0.50. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.50. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.50. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.50. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_CANADA | This should match your Databricks pricing model. Defaults to 0.47. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.50. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.50. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.50. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_EUROPE_LONDON | This should match your Databricks pricing model. Defaults to 0.50. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_SA_BRAZIL | This should match your Databricks pricing model. Defaults to 0.59. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.45. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.45. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_US_WEST_CALIFORNIA | This should match your Databricks pricing model. Defaults to 0.47. Override by setting your value in Parameter Store. |
| ENTERPRISE_JOBS_SERVERLESS_COMPUTE_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.45. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_AP_SEOUL | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_CANADA | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_EUROPE_LONDON | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_SA_BRAZIL | This should match your Databricks pricing model. Defaults to 1.11. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_US_WEST_CALIFORNIA | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| ENTERPRISE_MODEL_TRAINING_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.07. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.09. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.09. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.09. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_CANADA | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.07. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.09. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.09. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.09. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_CANADA | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.07. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.07. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.07. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.07. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.07. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_US_WEST_CALIFORNIA | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_REAL_TIME_INFERENCE_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.07. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.77. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.88. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.95. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_AP_TOKYO | This should match your Databricks pricing model. Defaults to 1.00. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.91. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.91. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.91. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_SA_BRAZIL | This should match your Databricks pricing model. Defaults to 1.09. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.70. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.70. Override by setting your value in Parameter Store. |
| ENTERPRISE_SERVERLESS_SQL_COMPUTE_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.70. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_COMPUTE | This should match your Databricks pricing model. Defaults to 0.22. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.61. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_AP_SEOUL | This should match your Databricks pricing model. Defaults to 0.74. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.69. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.74. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_CANADA | This should match your Databricks pricing model. Defaults to 0.62. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.72. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.72. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.72. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_EUROPE_LONDON | This should match your Databricks pricing model. Defaults to 0.74. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_SA_BRAZIL | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_US_WEST_CALIFORNIA | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| ENTERPRISE_SQL_PRO_COMPUTE_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| MCT_INTERNAL_RND_USAGE | This should match your Databricks pricing model. Defaults to 1.00. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.98. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_AP_SEOUL | This should match your Databricks pricing model. Defaults to 0.98. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.98. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.98. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.98. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_CANADA | This should match your Databricks pricing model. Defaults to 0.75. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.90. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.90. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.90. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_EUROPE_LONDON | This should match your Databricks pricing model. Defaults to 0.90. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_SA_BRAZIL | This should match your Databricks pricing model. Defaults to 1.28. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.75. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.75. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_US_WEST_CALIFORNIA | This should match your Databricks pricing model. Defaults to 0.75. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_HERO_RESERVATION_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.75. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_IN_CUSTOMER_TENANCY | This should match your Databricks pricing model. Defaults to 0.40. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_AP_SEOUL | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_CANADA | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_EUROPE_LONDON | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_SA_BRAZIL | This should match your Databricks pricing model. Defaults to 1.11. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_US_WEST_CALIFORNIA | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_ON_DEMAND_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.72. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_AP_SEOUL | This should match your Databricks pricing model. Defaults to 0.72. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.72. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.72. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.72. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_CANADA | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.66. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.66. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.66. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_EUROPE_LONDON | This should match your Databricks pricing model. Defaults to 0.66. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_SA_BRAZIL | This should match your Databricks pricing model. Defaults to 0.94. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_US_WEST_CALIFORNIA | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| MCT_MODEL_TRAINING_RESERVATION_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| PREMIUM_ALL_PURPOSE_COMPUTE | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| PREMIUM_ALL_PURPOSE_COMPUTE.DLT | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| PREMIUM_ALL_PURPOSE_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.55. Override by setting your value in Parameter Store. |
| PREMIUM_DLT_ADVANCED_COMPUTE | This should match your Databricks pricing model. Defaults to 0.36. Override by setting your value in Parameter Store. |
| PREMIUM_DLT_ADVANCED_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.36. Override by setting your value in Parameter Store. |
| PREMIUM_DLT_CORE_COMPUTE | This should match your Databricks pricing model. Defaults to 0.20. Override by setting your value in Parameter Store. |
| PREMIUM_DLT_CORE_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.20. Override by setting your value in Parameter Store. |
| PREMIUM_DLT_PRO_COMPUTE | This should match your Databricks pricing model. Defaults to 0.25. Override by setting your value in Parameter Store. |
| PREMIUM_DLT_PRO_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.25. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_COMPUTE | This should match your Databricks pricing model. Defaults to 0.15. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_COMPUTE.PHOTON | This should match your Databricks pricing model. Defaults to 0.15. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_LIGHT_COMPUTE | This should match your Databricks pricing model. Defaults to 0.10. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.37. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_AP_SEOUL | This should match your Databricks pricing model. Defaults to 0.39. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.39. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.39. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.39. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_CANADA | This should match your Databricks pricing model. Defaults to 0.37. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.39. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.39. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.39. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_EUROPE_LONDON | This should match your Databricks pricing model. Defaults to 0.39. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_SA_BRAZIL | This should match your Databricks pricing model. Defaults to 0.46. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.35. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.35. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_US_WEST_CALIFORNIA | This should match your Databricks pricing model. Defaults to 0.37. Override by setting your value in Parameter Store. |
| PREMIUM_JOBS_SERVERLESS_COMPUTE_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.35. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_AP_SEOUL | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.85. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_CANADA | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_EUROPE_LONDON | This should match your Databricks pricing model. Defaults to 0.78. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_SA_BRAZIL | This should match your Databricks pricing model. Defaults to 1.11. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_US_EAST_OHIO | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_US_WEST_CALIFORNIA | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| PREMIUM_MODEL_TRAINING_US_WEST_OREGON | This should match your Databricks pricing model. Defaults to 0.65. Override by setting your value in Parameter Store. |
| PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_AP_MUMBAI | This should match your Databricks pricing model. Defaults to 0.07. Override by setting your value in Parameter Store. |
| PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_AP_SINGAPORE | This should match your Databricks pricing model. Defaults to 0.09. Override by setting your value in Parameter Store. |
| PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_AP_SYDNEY | This should match your Databricks pricing model. Defaults to 0.09. Override by setting your value in Parameter Store. |
| PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_AP_TOKYO | This should match your Databricks pricing model. Defaults to 0.09. Override by setting your value in Parameter Store. |
| PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_CANADA | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_EUROPE_FRANCE | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_EUROPE_FRANKFURT | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |
| PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_EUROPE_IRELAND | This should match your Databricks pricing model. Defaults to 0.08. Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_AP_MUMBAI | This should match your Databricks pricing model. defaults to 0.07 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_AP_SINGAPORE | This should match your Databricks pricing model. defaults to 0.09 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_AP_SYDNEY | This should match your Databricks pricing model. defaults to 0.09 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_AP_TOKYO | This should match your Databricks pricing model. defaults to 0.09 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_CANADA | This should match your Databricks pricing model. defaults to 0.08 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_EUROPE_FRANCE | This should match your Databricks pricing model. defaults to 0.08 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_EUROPE_FRANKFURT | This should match your Databricks pricing model. defaults to 0.08 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_EUROPE_IRELAND | This should match your Databricks pricing model. defaults to 0.08 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_SA_BRAZIL | This should match your Databricks pricing model. defaults to 0.11 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. defaults to 0.07 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_US_EAST_OHIO | This should match your Databricks pricing model. defaults to 0.07 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_LAUNCH_US_WEST_OREGON | This should match your Databricks pricing model. defaults to 0.07 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_SA_BRAZIL | This should match your Databricks pricing model. defaults to 0.11 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. defaults to 0.07 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_US_EAST_OHIO | This should match your Databricks pricing model. defaults to 0.07 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_US_WEST_CALIFORNIA | This should match your Databricks pricing model. defaults to 0.08 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_REAL_TIME_INFERENCE_US_WEST_OREGON | This should match your Databricks pricing model. defaults to 0.07 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE | This should match your Databricks pricing model. defaults to 0.55 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_AP_MUMBAI | This should match your Databricks pricing model. defaults to 0.77 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_AP_SINGAPORE | This should match your Databricks pricing model. defaults to 0.88 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_AP_SYDNEY | This should match your Databricks pricing model. defaults to 0.95 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_AP_TOKYO | This should match your Databricks pricing model. defaults to 1.00 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_EUROPE_FRANCE | This should match your Databricks pricing model. defaults to 0.91 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_EUROPE_FRANKFURT | This should match your Databricks pricing model. defaults to 0.91 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_EUROPE_IRELAND | This should match your Databricks pricing model. defaults to 0.91 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_SA_BRAZIL | This should match your Databricks pricing model. defaults to 1.09 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. defaults to 0.70 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_US_EAST_OHIO | This should match your Databricks pricing model. defaults to 0.70 Override by setting your value in Parameter Store. |

PREMIUM_SERVERLESS_SQL_COMPUTE_US_WEST_OREGON | This should match your Databricks pricing model. defaults to 0.70 Override by setting your value in Parameter Store. |

PREMIUM_SQL_COMPUTE | This should match your Databricks pricing model. defaults to 0.22 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_AP_MUMBAI | This should match your Databricks pricing model. defaults to 0.61 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_AP_SEOUL | This should match your Databricks pricing model. defaults to 0.74 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_AP_SINGAPORE | This should match your Databricks pricing model. defaults to 0.69 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_AP_SYDNEY | This should match your Databricks pricing model. defaults to 0.74 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_AP_TOKYO | This should match your Databricks pricing model. defaults to 0.78 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_CANADA | This should match your Databricks pricing model. defaults to 0.62 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_EUROPE_FRANCE | This should match your Databricks pricing model. defaults to 0.72 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_EUROPE_FRANKFURT | This should match your Databricks pricing model. defaults to 0.72 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_EUROPE_IRELAND | This should match your Databricks pricing model. defaults to 0.72 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_EUROPE_LONDON | This should match your Databricks pricing model. defaults to 0.74 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_SA_BRAZIL | This should match your Databricks pricing model. defaults to 0.85 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_US_EAST_N_VIRGINIA | This should match your Databricks pricing model. defaults to 0.55 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_US_EAST_OHIO | This should match your Databricks pricing model. defaults to 0.55 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_US_WEST_CALIFORNIA | This should match your Databricks pricing model. defaults to 0.55 Override by setting your value in Parameter Store. |

PREMIUM_SQL_PRO_COMPUTE_US_WEST_OREGON | This should match your Databricks pricing model. defaults to 0.55 Override by setting your value in Parameter Store. |

STANDARD_ALL_PURPOSE_COMPUTE | This should match your Databricks pricing model. defaults to 0.40 Override by setting your value in Parameter Store. |

STANDARD_ALL_PURPOSE_COMPUTE.DLT | This should match your Databricks pricing model. defaults to 0.40 Override by setting your value in Parameter Store. |

STANDARD_ALL_PURPOSE_COMPUTE.PHOTON | This should match your Databricks pricing model. defaults to 0.40 Override by setting your value in Parameter Store. |

STANDARD_DLT_ADVANCED_COMPUTE | This should match your Databricks pricing model. defaults to 0.36 Override by setting your value in Parameter Store. |

STANDARD_DLT_ADVANCED_COMPUTE.PHOTON | This should match your Databricks pricing model. defaults to 0.36 Override by setting your value in Parameter Store. |

STANDARD_DLT_CORE_COMPUTE | This should match your Databricks pricing model. defaults to 0.20 Override by setting your value in Parameter Store. |

STANDARD_DLT_CORE_COMPUTE.PHOTON | This should match your Databricks pricing model. defaults to 0.20 Override by setting your value in Parameter Store. |

STANDARD_DLT_PRO_COMPUTE | This should match your Databricks pricing model. defaults to 0.25 Override by setting your value in Parameter Store. |

STANDARD_DLT_PRO_COMPUTE.PHOTON | This should match your Databricks pricing model. defaults to 0.25 Override by setting your value in Parameter Store. |

STANDARD_JOBS_COMPUTE | This should match your Databricks pricing model. defaults to 0.10 Override by setting your value in Parameter Store. |

STANDARD_JOBS_COMPUTE.PHOTON | This should match your Databricks pricing model. defaults to 0.10 Override by setting your value in Parameter Store. |

STANDARD_JOBS_LIGHT_COMPUTE | This should match your Databricks pricing model. defaults to 0.07 Override by setting your value in Parameter Store. |

STANDARD_SERVERLESS_SQL_COMPUTE | This should match your Databricks pricing model. defaults to 0.55 Override by setting your value in Parameter Store. |

STANDARD_SQL_COMPUTE | This should match your Databricks pricing model. defaults to 0.22 Override by setting your value in Parameter Store. |
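
As an illustration of how a default and an override interact, the sketch below resolves a rate and applies it to a usage quantity. It assumes each value in the table is a price per DBU and that an override is simply a value stored under the same name. The function names, the in-memory overrides dictionary, and the cost formula are illustrative assumptions, not the adaptor's actual code.

```python
from decimal import Decimal

# A few defaults copied from the table above; the real list is much longer.
DEFAULT_RATES = {
    "PREMIUM_SQL_COMPUTE": Decimal("0.22"),
    "STANDARD_ALL_PURPOSE_COMPUTE": Decimal("0.40"),
    "STANDARD_JOBS_COMPUTE": Decimal("0.10"),
}


def resolve_rate(name, overrides):
    """Return the override for `name` if one is set, else the documented default.

    `overrides` stands in for values read from Parameter Store (hypothetical shape).
    """
    if name in overrides:
        return Decimal(str(overrides[name]))
    return DEFAULT_RATES[name]


def estimate_cost(name, dbus, overrides=None):
    """Assumption: cost = DBUs consumed x rate per DBU."""
    rate = resolve_rate(name, overrides or {})
    return Decimal(str(dbus)) * rate


if __name__ == "__main__":
    # Default rate, then a negotiated rate supplied as an override.
    print(estimate_cost("STANDARD_JOBS_COMPUTE", 100))
    print(estimate_cost("STANDARD_JOBS_COMPUTE", 100, {"STANDARD_JOBS_COMPUTE": "0.08"}))
```

Decimal is used rather than float so that rates such as 0.10 are not subject to binary rounding when multiplied by large usage quantities.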

Set up your Compute of choice

You can run the adaptor as a container in Lambda or as a container in Kubernetes.

Step 5 - Lambda

Step 5.1: Setup your ECR Storage

You will need to pull the CloudZero image into your own ECR instance. To do this, follow the steps below.

- Upload and register the CloudZero Databricks Adaptor for Lambda container to your AWS ECR storage. For more information, refer to the Push your image to Amazon Elastic Container Registry section of this AWS document.

- Copy the URI of the ECR container instance you created for use in later setup.
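
The steps above can be sketched as a small helper that builds the ECR image URI and the usual pull, tag, and push commands. The account ID, region, and repository name are placeholders you would supply, and the helper names are hypothetical. The sketch also assumes the ECR repository already exists and that the AWS CLI and Docker are installed.

```python
# Lambda image published by CloudZero (from Step 1 of this guide).
SOURCE_IMAGE = "ghcr.io/cloudzero/cloudzero-cost-adaptor-databricks-lambda:latest"


def ecr_image_uri(account_id, region, repository, tag="latest"):
    """Build the URI of an image in a private ECR repository."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


def ecr_push_commands(account_id, region, repository, tag="latest"):
    """Return the shell commands that copy the CloudZero image into your ECR."""
    target = ecr_image_uri(account_id, region, repository, tag)
    registry = target.split("/", 1)[0]  # host part, used for docker login
    return [
        f"aws ecr get-login-password --region {region} | "
        f"docker login --username AWS --password-stdin {registry}",
        f"docker pull {SOURCE_IMAGE}",
        f"docker tag {SOURCE_IMAGE} {target}",
        f"docker push {target}",
    ]


if __name__ == "__main__":
    # Placeholder account, region, and repository name.
    for command in ecr_push_commands("123456789012", "us-east-1", "cloudzero-databricks-adaptor"):
        print(command)
```

The value returned by ecr_image_uri is the URI to keep for the Lambda setup in the next step.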

Step 5.2: Create and configure your AWS Lambda

You need to create and configure the Lambda function that will execute the adaptor from your ECR instance.

To do this, follow the steps below.

- Create an AWS Lambda using the Container Image template.

- Input the URI of your ECR instance created in Step 5.1.

- By default, the Lambda creation process will create a default execution role. We will use this role, so note its ID.

- In the Configuration tab of your Lambda settings, edit your General Configuration section.

  - Set your Timeout value to 15 minutes and Memory to 4GB.

- Edit your Environment Variables section.

  - Add the variable DATABRICKS_USAGE_LOGS_S3_BUCKET set to the name of the S3 bucket you configured to store your Databricks usage logs (e.g., /Databricks_Usage_Logs/).

  - Add the variable S3_BUCKET set to the name of the S3 bucket configured to store the results. Be sure to include leading and trailing slashes (e.g., /Databricks_Adaptor_Results/).

  - Add the variable S3_BUCKET_FOLDER set to a folder/object path to store the results in (e.g., Prod/).

  - Add the variable SSM_PARAMETER_STORE_FOLDER_PATH with the value of the location of your configuration variables in AWS Parameter Store. Be sure to include leading and trailing slashes (e.g., /CloudZero/Databricks_Adaptor/).

- Access the execution role auto-created with the Lambda, and add the following permissions.

"ssm:GetParametersByPath",

"ssm:GetParameters",

"ssm:GetParameter",

"kms:Decrypt"

Step 5.3: Permissions to write to S3

- Grant the auto-created Lambda execution role full access rights to the S3 bucket you configured to store the results. For more information, see the AWS S3 User Policy Examples documentation.
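
For reference, the Parameter Store, KMS, and S3 permissions described for the execution role could be expressed as a single IAM policy document. The sketch below builds one as a Python dictionary. The function name, statement IDs, account ID, bucket name, and KMS key ARN are placeholders, and scoping the Parameter Store access to the configured path is an assumption rather than a stated requirement. Read access to the usage logs bucket is not included here.

```python
import json


def adaptor_policy(region, account_id, ssm_path, results_bucket, kms_key_arn):
    """Build an IAM policy document for the adaptor's execution role (sketch)."""
    path = ssm_path.strip("/")  # parameter ARNs do not repeat the leading slash
    param_arn = f"arn:aws:ssm:{region}:{account_id}:parameter/{path}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadAdaptorConfiguration",
                "Effect": "Allow",
                "Action": [
                    "ssm:GetParametersByPath",
                    "ssm:GetParameters",
                    "ssm:GetParameter",
                ],
                "Resource": [param_arn, f"{param_arn}/*"],
            },
            {
                "Sid": "DecryptSecureParameters",
                "Effect": "Allow",
                "Action": ["kms:Decrypt"],
                "Resource": [kms_key_arn],
            },
            {
                # The guide asks for full access to the results bucket.
                "Sid": "ResultsBucketFullAccess",
                "Effect": "Allow",
                "Action": ["s3:*"],
                "Resource": [
                    f"arn:aws:s3:::{results_bucket}",
                    f"arn:aws:s3:::{results_bucket}/*",
                ],
            },
        ],
    }


if __name__ == "__main__":
    policy = adaptor_policy(
        region="us-east-1",
        account_id="123456789012",  # placeholder
        ssm_path="/CloudZero/Databricks_Adaptor/",
        results_bucket="my-adaptor-results",  # placeholder
        kms_key_arn="arn:aws:kms:us-east-1:123456789012:key/EXAMPLE",  # placeholder
    )
    print(json.dumps(policy, indent=2))
```

The printed JSON can be pasted into the IAM console as an inline policy on the execution role.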

Step 5.4: Schedule your Databricks adaptor

Once all the parts are in place, follow the steps below to set up the run schedule of your Databricks Adaptor.

- Access your Lambda settings, and select the Add Trigger button.

- Choose the EventBridge (CloudWatch Events) trigger type, and select Create New Rule.

- Set the Schedule Expression to run on a regular cadence. We recommend running the adaptor on the same cadence as your Databricks billable usage logs delivery for optimal efficiency.

- Once added, your Lambda will begin executing at the set time, and you will begin seeing data drops in the S3 bucket you configured.
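
EventBridge schedule expressions come in two forms, rate(...) and cron(...). A minimal sketch of helpers that produce each form is shown below. The helper names are hypothetical, and the cadence you choose should follow your own usage log delivery. Note that a rate expression takes a singular unit when the value is 1 and a plural unit otherwise.

```python
def rate_expression(value, unit):
    """Build an EventBridge rate expression such as 'rate(1 day)' or 'rate(12 hours)'."""
    if unit not in ("minute", "hour", "day"):
        raise ValueError("unit must be 'minute', 'hour', or 'day'")
    if value < 1:
        raise ValueError("value must be a positive integer")
    suffix = "" if value == 1 else "s"  # singular for 1, plural otherwise
    return f"rate({value} {unit}{suffix})"


def daily_cron_expression(hour_utc, minute=0):
    """Build an EventBridge cron expression that fires once a day at the given UTC time.

    Field order is: minutes hours day-of-month month day-of-week year.
    """
    return f"cron({minute} {hour_utc} * * ? *)"


if __name__ == "__main__":
    print(rate_expression(1, "day"))
    print(rate_expression(12, "hour"))
    print(daily_cron_expression(6))
```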

Step 5 - Container

Step 5.1: Execution Environment

Setting up and configuring a cluster is left to the user, as everyone handles this differently. You may want to run the container as a CronJob or on some other schedule.

To run the container one time, use a command like the following:

docker run \
  -e DATABRICKS_USAGE_LOGS_S3_BUCKET=cloudzero-kevin-cost-adaptor-databricks \
  -e S3_BUCKET=cloudzero-kevin-cost-adaptor-databricks \
  -e S3_BUCKET_FOLDER=output-to-cloudzero/ \
  -e SSM_PARAMETER_STORE_FOLDER_PATH=/databricks-adaptor-test/ \
  -e AWS_DEFAULT_REGION=us-east-1 \
  ghcr.io/cloudzero/cloudzero-cost-adaptor-databricks-main:latest
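
If the container is launched from a scheduler written in Python, the same invocation can be assembled as an argument list, which avoids shell quoting issues. This is only a sketch: the function name and the check for required settings are assumptions, and the bucket names shown are placeholders.

```python
# Kubernetes/main image published by CloudZero (from Step 1 of this guide).
IMAGE = "ghcr.io/cloudzero/cloudzero-cost-adaptor-databricks-main:latest"

# Settings the guide lists for the adaptor; the region is treated as optional here.
REQUIRED_VARS = (
    "DATABRICKS_USAGE_LOGS_S3_BUCKET",
    "S3_BUCKET",
    "S3_BUCKET_FOLDER",
    "SSM_PARAMETER_STORE_FOLDER_PATH",
)


def docker_run_argv(env):
    """Return the argv list for a one-off `docker run` of the adaptor."""
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise ValueError("missing required settings: " + ", ".join(missing))
    argv = ["docker", "run"]
    for name, value in env.items():
        argv += ["-e", f"{name}={value}"]  # one -e flag per variable
    argv.append(IMAGE)
    return argv


if __name__ == "__main__":
    settings = {
        "DATABRICKS_USAGE_LOGS_S3_BUCKET": "my-usage-logs",  # placeholder
        "S3_BUCKET": "my-adaptor-results",  # placeholder
        "S3_BUCKET_FOLDER": "output-to-cloudzero/",
        "SSM_PARAMETER_STORE_FOLDER_PATH": "/databricks-adaptor-test/",
        "AWS_DEFAULT_REGION": "us-east-1",
    }
    print(" ".join(docker_run_argv(settings)))
```

To actually start the container, the list can be passed to subprocess.run(argv, check=True) on a host where Docker is installed and AWS credentials are available.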

Step 5.2: Permissions

The Pod will need read and write permission to the two S3 buckets and to the Parameter Store. One way to achieve this is by granting permission to the Node role under which the container will run. Alternatively, you can create a service role for the pod running the container.

Once again, these directions are generic, as each user will have a slightly different way of executing the container and granting it permissions.

Step 6: Create a CloudZero billing connection

Once your files are successfully dropping to your S3 bucket, you will need to set up a CloudZero custom connection to begin ingesting this data into the platform.

For more information on how to do this, see Connecting Custom Data from AnyCost Adaptors.

Updated 8 months ago